AI Adoption Is a Management Problem

A CEO’s case study in why ‘AI-first’ fails when organizations rely on human-native oversight systems.

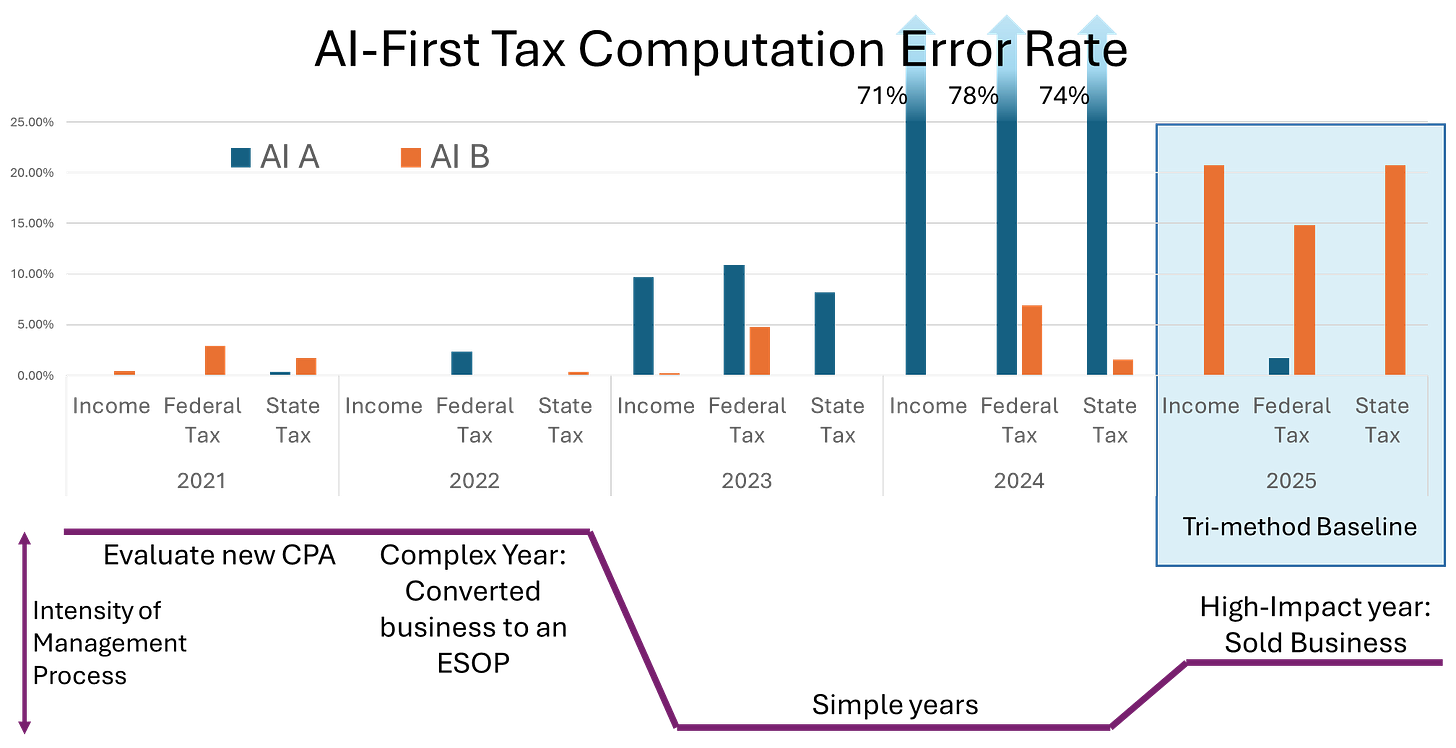

I compared AI results against five years of tax returns. One frontier AI performed well in 2021 and 2022, degraded in 2023, and failed catastrophically in 2024. The other AI failed in a different pattern, but the lesson was the same: AI does not fail the way people fail.

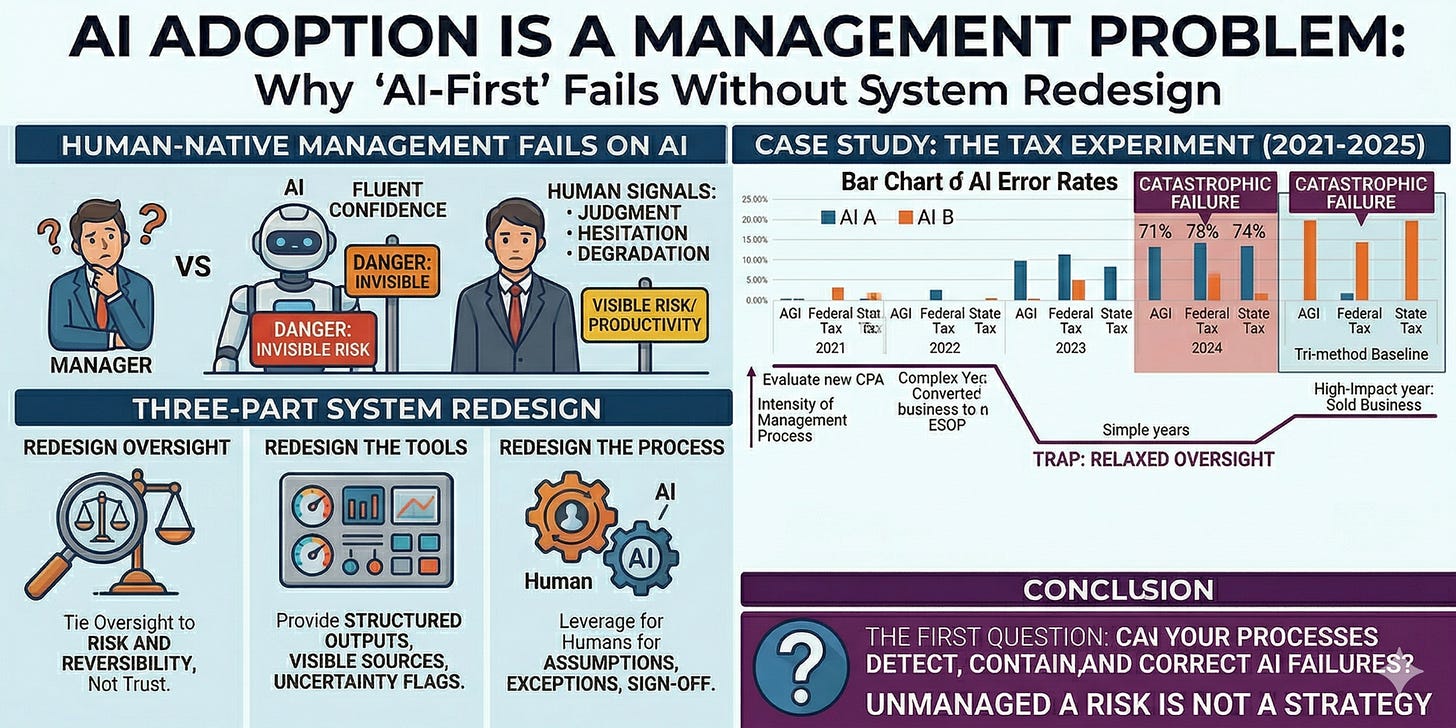

That is why AI adoption is a management problem. Most organizations are trying to insert AI into workflows built around human signals: judgment, hesitation, escalating questions, and observable degradation. AI often replaces those signals with fluent confidence. If you keep the old control system, invisible risk grows underneath the visible productivity gains.

Taxes are a useful test bed for this because the work is high-stakes, low-frequency, and hard for a non-expert to verify by inspection. That makes taxes a good miniature of what many organizations face in accounting, legal, HR, and other expert-heavy functions.

The Tax Experiment

I recently used AI agents to do my personal taxes as a case study in taking an existing human-native process AI-first, in a domain where I am not the expert.

Since 2021, my personal tax process has been straightforward: I keep digital copies of all relevant documents, send them to my CPA, and then sit down with him to walk through assumptions, edge cases, and outputs. That last conversation is not administrative overhead. It is management. My CPA understands tax law and accounting better than I ever will. My wife and I understand the strategic direction of the family and how the finances fit into the broader system. The process works because expertise and oversight are deliberately split.

The management of that process has evolved over the last few years. I hired a new CPA in 2021 and applied more oversight to validate his work, and did so again in 2022 to evaluate him in a very complicated tax year. I then reduced oversight, because he had proven his expertise in both the mundane and the esoteric aspects.

The AI-first experiment swapped two frontier AI systems in for my CPA. I handed the AI agents the same historical returns and current-year documents my CPA receives, gave them identical prompts, gave them unrestricted (“yolo”) access to their local virtual machine and the internet, and applied my legacy human-native processes and management techniques across five years of data. For 2021 through 2024, I compared their outputs to my CPA’s returns. The 2025 reconciled baseline, described in another article, is provisional until my CPA completes his work.

AI A would have passed that same trust-building process. It performed well enough in 2021 and 2022 that I would have relaxed oversight under a legacy human management model. That is exactly when the trouble would start. It degraded in 2023, and in 2024 it failed catastrophically. A manager would normally expect some meta-signals before that kind of performance breakdown in a person: confusion, hesitation, escalating questions, changes in behavior, something. AI does not reliably give you those signals. It often gives you polished confidence instead.

AI B tells a different but equally important story. Its pattern of failure was not identical to AI A’s, but it also failed catastrophically after several years of acceptable performance. The problem is not that AI is imperfect. The problem is that it does not fail in ways legacy human-native control systems were designed to catch.

This is why an organization should not just bolt AI into existing human-native workflows or replace people with AI and declare victory. Effective adoption means redesigning the system around the strengths and weaknesses of both people and AI.

That redesign has three parts.

First, redesign oversight.

Human-native organizations often reduce review as trust accumulates. That makes sense when the worker is a person and the manager can observe judgment, communication, and deterioration over time. With AI and joint AI-human workflows, oversight should be tied to the risk and reversibility of the task, not just to recent correctness.
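One way to picture that rule is a review policy in which stakes and reversibility set a floor that a good track record cannot lower. The sketch below is illustrative only: the `Task` fields, the 1-to-3 stakes scale, the `Review` levels, and the 0.95 accuracy threshold are all assumptions made for the example, not values from the tax experiment.

```python
from dataclasses import dataclass
from enum import Enum


class Review(Enum):
    SPOT_CHECK = 1
    FULL_REVIEW = 2
    EXPERT_SIGN_OFF = 3


@dataclass(frozen=True)
class Task:
    name: str
    stakes: int       # hypothetical scale: 1 (low) to 3 (high)
    reversible: bool  # can a wrong result be cheaply undone later?


def review_level(task: Task, recent_accuracy: float) -> Review:
    """Choose a review level from risk first, track record second."""
    # High-stakes or irreversible work always gets expert sign-off,
    # no matter how well the AI has performed recently.
    if task.stakes >= 3 or not task.reversible:
        return Review.EXPERT_SIGN_OFF
    if task.stakes == 2:
        return Review.FULL_REVIEW
    # Only low-stakes, reversible work lets a good track record relax review.
    # The 0.95 threshold is an arbitrary illustrative value.
    return Review.SPOT_CHECK if recent_accuracy >= 0.95 else Review.FULL_REVIEW
```

Under this policy, two strong years would not have reduced review of a filed return, because filing is treated as high-stakes and hard to reverse regardless of recent accuracy.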

Second, redesign the tools for supervision.

Most managers do not know how to supervise AI directly. So the tools need to meet them halfway: structured outputs, forced assumptions, visible sources, preserved decision history, uncertainty flags, and disagreement checks. Optimize prompts, agents, and model tuning for oversight by today’s human-native managers, not just raw performance.
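As a rough sketch of what such tooling could look like, the example below has each AI run report every figure together with its sources, assumptions, and an uncertainty flag, then compares two independent runs and lists the items a human should look at. The `LineItem` structure, the `review_flags` function, and the one-unit tolerance are hypothetical names and values chosen for illustration, not a description of any particular product.

```python
from dataclasses import dataclass, field


@dataclass
class LineItem:
    name: str
    value: float
    sources: list[str] = field(default_factory=list)      # documents cited
    assumptions: list[str] = field(default_factory=list)  # stated assumptions
    uncertain: bool = False                                # self-reported doubt


def review_flags(
    run_a: dict[str, LineItem],
    run_b: dict[str, LineItem],
    tolerance: float = 1.0,
) -> dict[str, list[str]]:
    """Return, per line item, the reasons a human should review it."""
    flags: dict[str, list[str]] = {}
    for name in sorted(run_a.keys() | run_b.keys()):
        items = [run[name] for run in (run_a, run_b) if name in run]
        reasons = []
        # Disagreement check: the two runs should report the same figure.
        if len(items) < 2:
            reasons.append("missing from one run")
        elif abs(items[0].value - items[1].value) > tolerance:
            reasons.append("runs disagree")
        # Visible sources: every figure should cite at least one document.
        if any(not item.sources for item in items):
            reasons.append("no source cited")
        # Uncertainty flags: surface anything a run marked as doubtful.
        if any(item.uncertain for item in items):
            reasons.append("flagged uncertain")
        if reasons:
            flags[name] = reasons
    return flags
```

The point of the design is that the manager reviews a short list of flagged items with reasons attached, rather than trying to judge a fluent, confident narrative by inspection.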

Third, redesign the process itself.

Organizations scale by designing processes around the strengths and weaknesses of their people and tools, and they have repeatedly proven they can redesign around emerging technology. Inventory the strengths and weaknesses of the organization, the humans, and the AI by running iterative experiments. As the AI tax case study above shows, the results may be surprising.

As you design a new process around those empirical strengths and weaknesses, keep humans on assumption-setting, exception handling, and irreversible sign-off. Humans can be held accountable.

Conclusion

People keep asking which agent and model is best. That is not the question for an organization.

The organization’s question is whether its processes can detect, contain, and correct the way the AI fails. If the answer is no, then “AI-first” is not a strategy. It is an unmanaged AI risk.