The AI Governance Trap

A CEO case study in managing AI-native processes when “right” is subjective, and being wrong has legal downside.

I’ve been running a bunch of research side quests trying to no-kidding understand how one would build and manage an AI-native organization beyond typical software engineering. There’s been a ton of speculation about which of an organization’s knowledge jobs are “susceptible” to AI. If we assume the speculation is right, then the harder question for leaders is more practical:

How do you manage AI-first output in domains where there’s no measurable “right answer,” no test suite like the vibe-coders can empirically build, and the downside for being wrong can be real legal penalties?

This post is a case study from finance: I went AI-first on my personal taxes so I could stress-test how I would manage AI-native processes in domains where I can’t just run unit tests and move on.

The CEO Problem

One of the CEO challenges is that you’re on the hook for activities of the business even when they are outside your specialty. You can hire domain experts, but it’s still often your signature on high-stakes work products and it’s you who gets the court summons.

When I managed humans outside my domain expertise, my default playbook was:

Routine briefings to monitor for changes or lack of changes

Spot checks to deep-dive into details (numbers, assumptions, edge cases)

Looking for “confidence gaps” where the briefer’s body language showed uncertainty

Sending people back for collaborative homework when there’s an assumption mismatch between parts of the business

That works with people because you can dig into the work product, the person’s reasoning, and the non-verbal communication. With AI, the “reasoning” sounds airtight even when the output is wrong.

So the question became: coding agents get a test suite… what do finance agents get?

Why taxes are a useful stress test

In my early days, I did too much myself. I ran the books, maintained the cashflow model, built cost proposals, and handled AR/AP, etc. until I could afford to onboard a professional. In short: I was the worst CFO/comptroller I ever had.

I know enough finance to be dangerous, which makes it a great place to explore an AI-native workflow: I can understand the narrative and spot obvious mistakes, but I can’t certify the fine print.

Taxes were the perfect “non-engineering” task because:

There’s no clean pass/fail harness that I can use as a non-expert

Subjective policy interpretation matters and cascades (it’s not just math)

If you get it wrong, your signature carries consequences

The experiment

I’m anonymizing the models. This is a process evaluation, not a model bake-off.

I gave a frontier closed-source model (Model A) agent access to past-year returns and the same organized raw information for 2025 that I gave my CPA (who is awesome, hi Brad!). I had the AI agent draft a return and walk me through the results. In thinking mode, it produced output that had good vibes.

But I wasn’t confident enough to sign it.

So I asked Model A to conduct its own “IRS-style audit,” categorize risks, and tell me what it was least certain about. The output felt like managing someone who projects total confidence all the time, which is exactly when your finely tuned BS detector goes off.

My wife’s approach (as a US government senior leader, her job is broader and she more often manages outside her own domain expertise) is to bring in an independent expert in these situations. I took the same approach and ran a second, independent workstream.

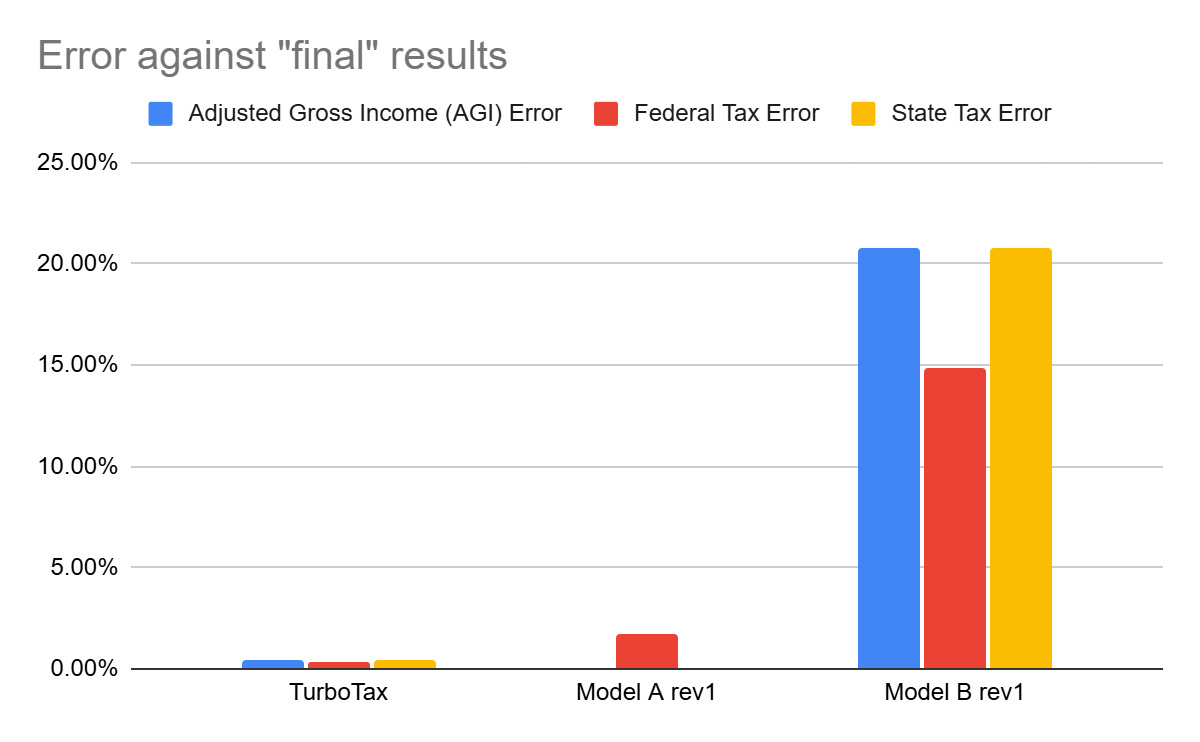

The TurboTax cross-check

I did the same return in TurboTax.

Model A and TurboTax were pretty close on the balance due… but they got there for very different reasons.

That implied something was wrong.

So I had Model A compare, contrast, and explain the TurboTax draft return versus its own return.

Aha: it immediately found a real error. I’d dropped a digit when manually entering a 1099 into TurboTax. It also listed a mess of other differences. Some were easy to explain (me doing a bad job interpreting complex 1099s). Others looked like outright differences in how ambiguous items were handled.

At that point, I didn’t trust either output. And I couldn’t ethically sign off that either was substantially correct.

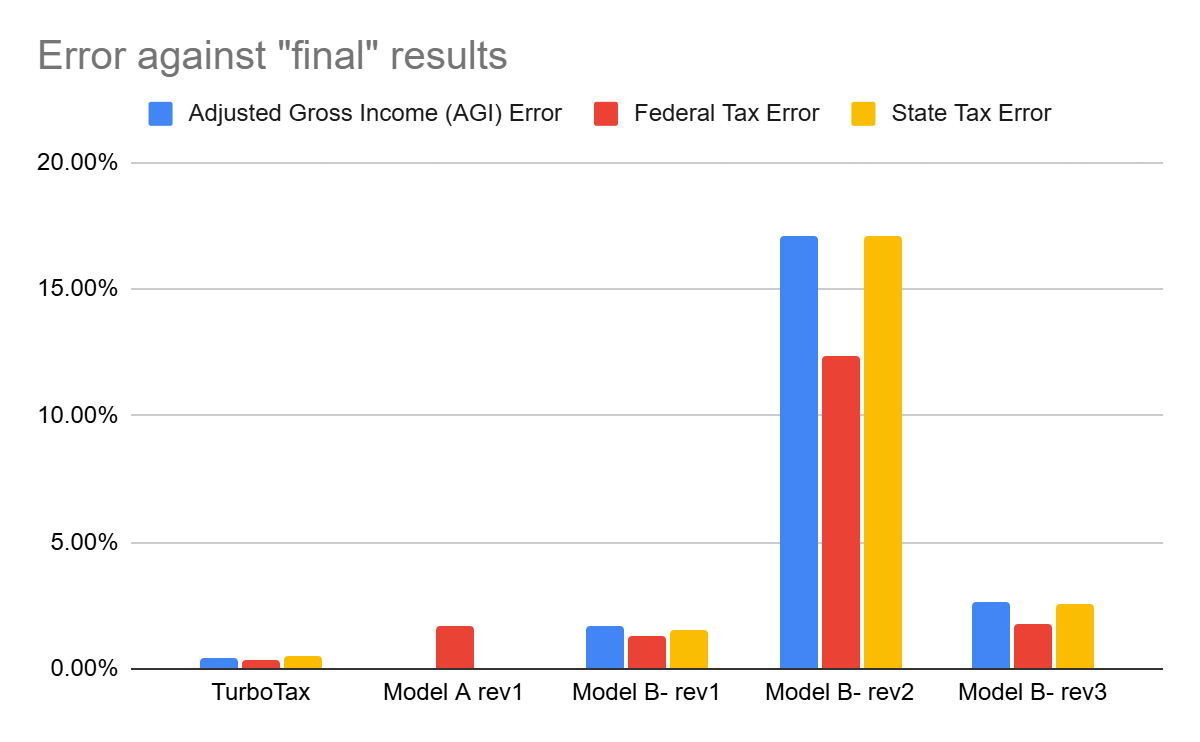

Trying a “cheaper verifier” (and why it failed)

Next I tested a mid-grade closed model (Model B-) hoping I could use a cheaper model to trust-but-verify an expensive model.

At first it wasn’t too far off. Then I asked it to self-analyze for audit risks and validate all its work, ask me questions to resolve ambiguity, and explain potential errors.

My incompetence alarm went off like a midwestern tornado siren.

The AI claimed to find an error in a Vanguard 1099 by citing a Fidelity 1099 as confirmation. It flagged Medicare wage base issues using obviously wrong assumptions. It started identifying missing accounts. It showed confusion about Treasury bills being exempt from state income tax, a topic where I know enough to be dangerous and enough to recognize when something is more dangerous than I am.

CEO hat on: if this were a senior employee, I would be having one of those crucial conversations about performance. If it were a junior employee, I’d be talking to their manager to figure out whether we have a mentoring problem, a manager problem, or a process problem that let this move forward.

This is the governance trap: a weaker verifier can generate confident critique while compounding errors.

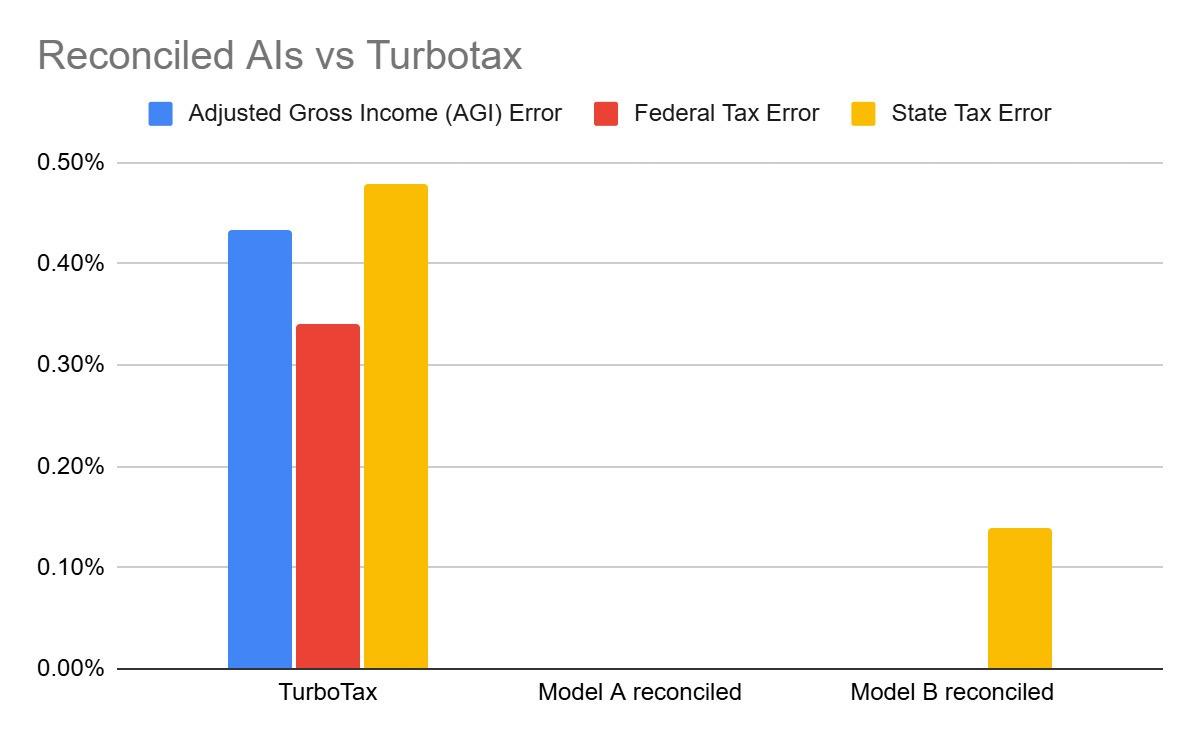

A workflow that converged

After that, I threw away the mid-grade model and used a second high-end thinking model. Its first pass was way off, which forced a pivot: instead of “AI vs TurboTax,” I ran “AI vs AI.”

I used two different frontier high-end models to reconcile against each other, and I had them produce interim work product that another AI, or a human, could actually audit. In the case of taxes, that means the full workbooks/workpapers, not just the final forms.

I gave the models strict instructions: neither output is to be trusted by default; compare, contrast, improve; and explicitly flag contradictions that can’t be resolved.

They effectively converged. Most discrepancies ended up being rounding differences, implementation details, or subtle differences rooted in tax law changes after Model B’s pre-training cutoff. The good lawyers always called this de minimis, which I always translated as “figuring this out will cost more than it will save, so please, Mike, don’t ask expensive questions like a junior engineer.”
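To make the reconciliation step concrete, here is a minimal sketch, assuming each model’s workpaper can be flattened into line-item amounts. The names `reconcile`, `Finding`, and `DE_MINIMIS`, the threshold value, and every figure below are hypothetical illustrations, not the prompts, tooling, or numbers from my actual return.

```python
from dataclasses import dataclass
from decimal import Decimal
from typing import Dict, List, Optional

# Hypothetical threshold: gaps at or below this are not worth chasing.
DE_MINIMIS = Decimal("5.00")


@dataclass(frozen=True)
class Finding:
    line_item: str
    value_a: Optional[Decimal]
    value_b: Optional[Decimal]
    status: str  # "match", "de_minimis", "escalate", or "missing"


def reconcile(
    workpaper_a: Dict[str, Decimal],
    workpaper_b: Dict[str, Decimal],
    tolerance: Decimal = DE_MINIMIS,
) -> List[Finding]:
    """Compare two workpapers line by line; trust neither by default."""
    findings = []
    for item in sorted(set(workpaper_a) | set(workpaper_b)):
        a = workpaper_a.get(item)
        b = workpaper_b.get(item)
        if a is None or b is None:
            status = "missing"      # one side never produced this line
        elif a == b:
            status = "match"
        elif abs(a - b) <= tolerance:
            status = "de_minimis"   # rounding-level difference
        else:
            status = "escalate"     # unresolved contradiction
        findings.append(Finding(item, a, b, status))
    return findings


# Made-up line items standing in for two models' interim workpapers.
model_a = {
    "wages": Decimal("100000.00"),
    "interest": Decimal("1200.00"),
    "dividends": Decimal("850.40"),
    "capital_gains": Decimal("3000.00"),
}
model_b = {
    "wages": Decimal("100000.00"),
    "interest": Decimal("2100.00"),
    "dividends": Decimal("850.00"),
    "state_exempt_interest": Decimal("400.00"),
}

findings = reconcile(model_a, model_b)
# Anything that is not a match or de minimis goes to a human (or a third reviewer).
needs_review = [f.line_item for f in findings if f.status in ("escalate", "missing")]
```

The point of the design is that the comparison runs on interim line items rather than the final balance due, so two returns that land on similar totals for different reasons still surface as contradictions.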

In the end, using two AIs to check each other’s work got me closer to a coherent, explainable tax return than trying to brute-force it through TurboTax alone. Applying the same two-AI process to future-year tax planning also converged toward much better answers with fewer errors. After they self-reconciled, they identified some future-year optimizations that I had not thought about and ruled out some over-optimizations that I had incorrectly assumed would be beneficial.

The conclusion I’m willing to sign my name to

Would I sign and submit the final work product from either model?

I would sign it with confidence. But I wouldn’t submit it without a knowledgeable human expert on call for a potential in-person IRS audit.

If the IRS decides to conduct an in-person audit, I need someone who can respond fast, explain the compliance, and handle the back-and-forth without waiting on fifty minutes of AI inference time. If two AIs enter the IRS thunderdome, the CPA is still the one who walks out.

Here’s what I’m concluding for managing AI-native processes in domains where there is no definitive “right answer” and no pass/fail test suite:

I need two distinct peer-level AIs to independently generate and then verify/critique each other’s work, with auditable interim artifacts (workbooks/workpapers), OR

I need a domain expert to manage the AI-native process in their domain, using AI as an accelerator but not the definitive authority.

If you’re building an AI-first org, this is the real work: designing the verification layer and the escalation path. AI doesn’t change the accountability model. You are still accountable for the organization whether it’s AI-first or human-first.

It’d be interesting to see how this workflow (and others) would perform on past years’ tax returns, where you’d presumably have a better ground-truth answer.