LLM Tokenizers as OSINT: What U.S. vs. Chinese AI Vocabularies Reveal about Competition

As the Department of War, the Intelligence Community, and industry rapidly adopt AI, it’s worth getting clear on how closed U.S. frontier models differ from the more open Chinese models they’re increasingly competing with.

One practical way to peek inside the black box is the tokenizer, which defines a model’s vocabulary. The tokenizer is shaped by the input a model is expected to handle, so it offers a rough signal about the language mix the model was optimized around (and often, what it likely saw during pretraining).

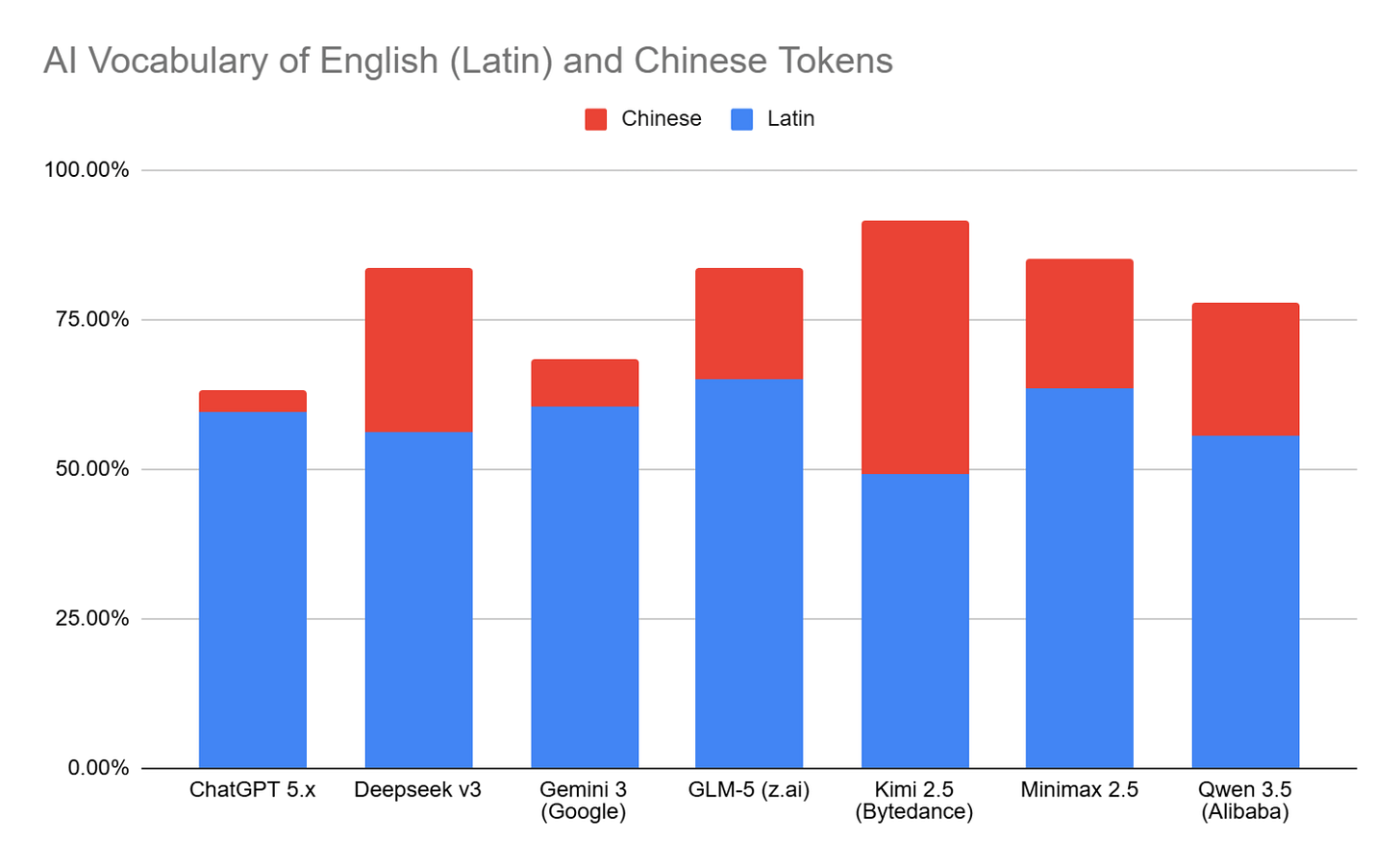

I graphed the share of several models’ [1] vocabularies made up of Latin-script tokens (a rough proxy for English) versus Chinese-language tokens. The pattern is stark: U.S. frontier models appear to allocate far less vocabulary capacity to Chinese than leading Chinese models do.
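For readers who want to try this kind of measurement themselves, here is a minimal sketch of one way to bucket tokens by script. It is not the method behind the chart above. It assumes the vocabulary has already been decoded into plain text strings (byte-level BPE vocabularies often store tokens in a byte-mapped form that must be decoded first), and the function names `script_of` and `vocab_shares` are illustrative. Note that Han characters are shared with Japanese, so the "han" bucket is only an approximation of Chinese.

```python
import unicodedata
from collections import Counter


def script_of(token: str) -> str:
    """Classify a decoded token as 'latin', 'han', 'other', 'mixed', or 'neutral'."""
    scripts = set()
    for ch in token:
        if not ch.isalpha():
            continue  # skip digits, punctuation, and whitespace
        name = unicodedata.name(ch, "")
        if name.startswith("LATIN"):
            scripts.add("latin")
        elif name.startswith("CJK UNIFIED IDEOGRAPH"):
            scripts.add("han")
        else:
            scripts.add("other")
    if not scripts:
        return "neutral"  # no letters at all, e.g. "123" or "!!"
    if len(scripts) == 1:
        return next(iter(scripts))
    return "mixed"


def vocab_shares(tokens):
    """Return the fraction of tokens that falls into each script bucket."""
    counts = Counter(script_of(t) for t in tokens)
    total = sum(counts.values())
    return {label: n / total for label, n in counts.items()} if total else {}


if __name__ == "__main__":
    # Toy vocabulary for illustration only, not real tokenizer data.
    sample = ["hello", " world", "中国", "你好", "123", "привет"]
    print(vocab_shares(sample))
```

Running the same function over each model's decoded vocabulary gives comparable per-script shares. The "other" bucket is a quick way to see how much capacity goes to languages beyond English and Chinese.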

This doesn’t prove exactly what any model was trained on, but it supports three useful working inferences:

1. Chinese models likely have access to deeper Chinese-language training data than U.S. companies do. They will likely reason more accurately about Chinese observables. No surprise here.

2. Chinese models likely have access to significant English (Latin-script) training data. They may reason about English observables on par with U.S. models.

3. Chinese models allocate far less vocabulary capacity to languages other than English and Chinese. U.S. frontier models likely have a significant advantage when reasoning about the rest of the world’s observables. This caught me by surprise.

We’re starting to see more hidden tool-use and coding tokens appear in these vocabularies. Those trends will likely help us infer which tools agentic AIs were specifically trained to use, and how. Likewise, if specific software libraries are represented in the vocabulary, we can infer that the LLM will prefer importing them into vibe-coded software and task-specific tooling. Those libraries would be prime targets for supply chain attacks that asymmetrically impact code generated by specific LLMs.
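As a rough illustration of the library check, the sketch below looks for candidate package names that appear as whole tokens in a vocabulary. The function name `libraries_in_vocab`, the candidate list, and the sample tokens are all hypothetical. It also assumes that word-boundary markers such as a leading space, `Ġ`, or `▁` should be ignored. Whole-token presence is only a weak signal: a name that splits into several tokens may still be one the model uses often.

```python
def libraries_in_vocab(tokens, candidates):
    """Return the candidate names that appear as a whole token.

    Leading word-boundary markers (space, 'Ġ', '▁') and letter case are ignored.
    """
    normalized = {t.strip().lstrip("Ġ▁").lower() for t in tokens}
    return sorted(name for name in candidates if name.lower() in normalized)


if __name__ == "__main__":
    # Hypothetical vocabulary fragment and watch list, for illustration only.
    sample_vocab = ["Ġnumpy", "▁pandas", "import", " requests", "num"]
    watch_list = ["numpy", "pandas", "requests", "leftpad"]
    print(libraries_in_vocab(sample_vocab, watch_list))
```

Comparing the hits across several models' vocabularies would show which libraries are distinctive to one model, which is where the asymmetric supply chain risk would concentrate.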

If you’re building AI for competition or conflict, bake this in explicitly. The differences won’t just be fine-tuned behaviors or deliberate alignment around safety or regulatory norms. They’ll include different priors, different blind spots, and different ways of reasoning at times.

Metehan Yesilyurt did an amazing writeup after reverse engineering ChatGPT’s tokenizer at https://metehan.ai/blog/reverse-engineering-the-gpt-5-tokenizer-aeo-geo/. It’s worth a much longer read!

[1] Note that Anthropic and xAI have not recently released a tokenizer from which to infer a modern vocabulary.